Introduction

For the better part of two decades, the data center industry lived by a simple mantra: “Ping, Power, and Pipe.” If a provider could guarantee a network signal (ping), steady electricity (power), and a fiber connection (pipe), they were considered a top-tier facility.

That standard no longer holds. Modern data centers go beyond ping-power-pipe with energy-efficient infrastructure, because the rapid rise of generative AI and real-time processing demands a transformation of the old model.

Today, businesses reject basic space in favor of high-density environments that stay smart, sustainable, and cost-effective. Managers now shift their focus from simply “keeping the lights on” to actively “optimizing every watt.”

The Evolution from Infrastructure to Ecosystem

In the old model, data centers were essentially high-tech warehouses. In 2027, they are complex ecosystems. The traditional “ping-power-pipe” setup was built for a world where server racks drew about 5 kW of power. Today, racks packed with AI-focused GPUs can demand upwards of 100 kW.

This massive jump in Power Density means that basic infrastructure can no longer handle the load. To stay competitive, modern facilities have had to reinvent how they manage energy, cooling, and connectivity.

Understanding the New Efficiency Metrics: PUE and WUE

In the modern data center landscape, efficiency isn’t a guess; it is measured. To understand how a center goes “beyond,” look at the metrics that define its environmental footprint.

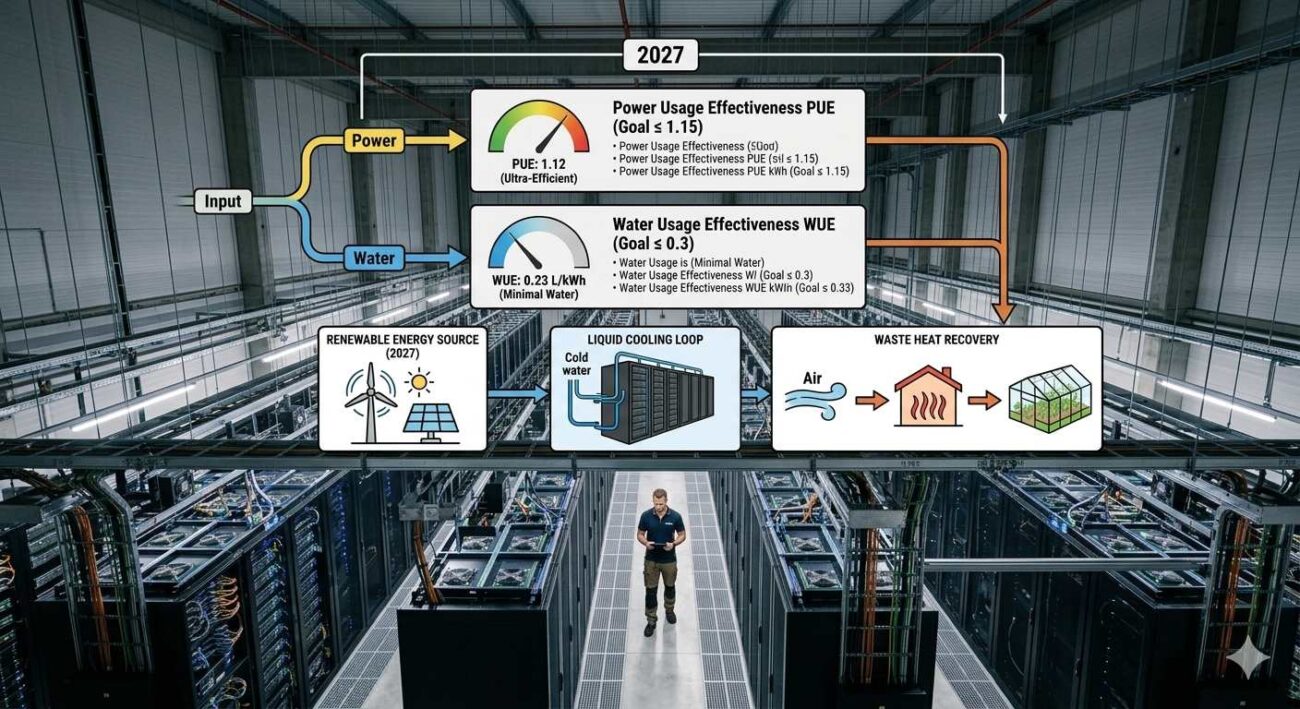

Power Usage Effectiveness (PUE)

PUE is the industry standard for measuring energy efficiency. It is the ratio of the total amount of energy used by a facility to the energy delivered to the computing equipment.

- The Old Way: A PUE of 1.7 meant that for every 100 watts delivered to the servers, another 70 watts went to overhead such as cooling, power distribution losses, and lighting.

- The 2027 Way: Modern centers aim for a PUE of 1.2 or lower. This level of efficiency is only possible by going beyond basic “power and pipe.”
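To make the ratio concrete, here is a minimal Python sketch of the PUE calculation described above. The function names and the meter readings are hypothetical and chosen only to reproduce the 1.7 and 1.2 figures from the list.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy divided by IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy cannot be less than IT energy")
    return total_facility_kwh / it_equipment_kwh


def overhead_per_100_it_units(pue_value: float) -> float:
    """Overhead energy (cooling, power losses, lighting) per 100 units of IT energy."""
    return (pue_value - 1.0) * 100.0


# Hypothetical monthly meter readings in kWh (illustrative values only).
legacy = pue(total_facility_kwh=170_000, it_equipment_kwh=100_000)
modern = pue(total_facility_kwh=120_000, it_equipment_kwh=100_000)

for label, value in (("legacy", legacy), ("modern", modern)):
    overhead = overhead_per_100_it_units(value)
    print(f"{label}: PUE {value:.2f}, overhead per 100 kWh of IT load: {overhead:.0f} kWh")
```

A PUE of exactly 1.0 would mean every watt entering the building reaches the IT equipment, so the value minus one is the overhead fraction.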

Water Usage Effectiveness (WUE)

As climate awareness grows, WUE has become equally important. It measures how many liters of water a facility uses per kilowatt-hour of IT energy. High-efficiency centers now use “closed-loop” cooling systems that recirculate the same water instead of evaporating it, which can bring their WUE close to zero.
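The WUE definition can be sketched the same way. The function name and both sets of readings below are hypothetical; they only illustrate how a closed-loop design moves the number toward zero.

```python
def wue(site_water_liters: float, it_energy_kwh: float) -> float:
    """Water Usage Effectiveness in liters of water per kWh of IT energy."""
    if it_energy_kwh <= 0:
        raise ValueError("IT energy must be positive")
    if site_water_liters < 0:
        raise ValueError("water use cannot be negative")
    return site_water_liters / it_energy_kwh


# Hypothetical annual figures (illustrative values only).
evaporative_site = wue(site_water_liters=180_000, it_energy_kwh=100_000)
closed_loop_site = wue(site_water_liters=2_000, it_energy_kwh=100_000)

print(f"evaporative cooling: {evaporative_site:.2f} L/kWh")
print(f"closed-loop cooling: {closed_loop_site:.2f} L/kWh")
```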

High-Density Cooling: The End of the Big Fan

One of the biggest shifts in “Going Beyond” is how we handle heat. Traditional air cooling, essentially giant fans blowing cold air, can no longer keep up with the heat output of high-performance computing racks on its own.

The Rise of Liquid Cooling

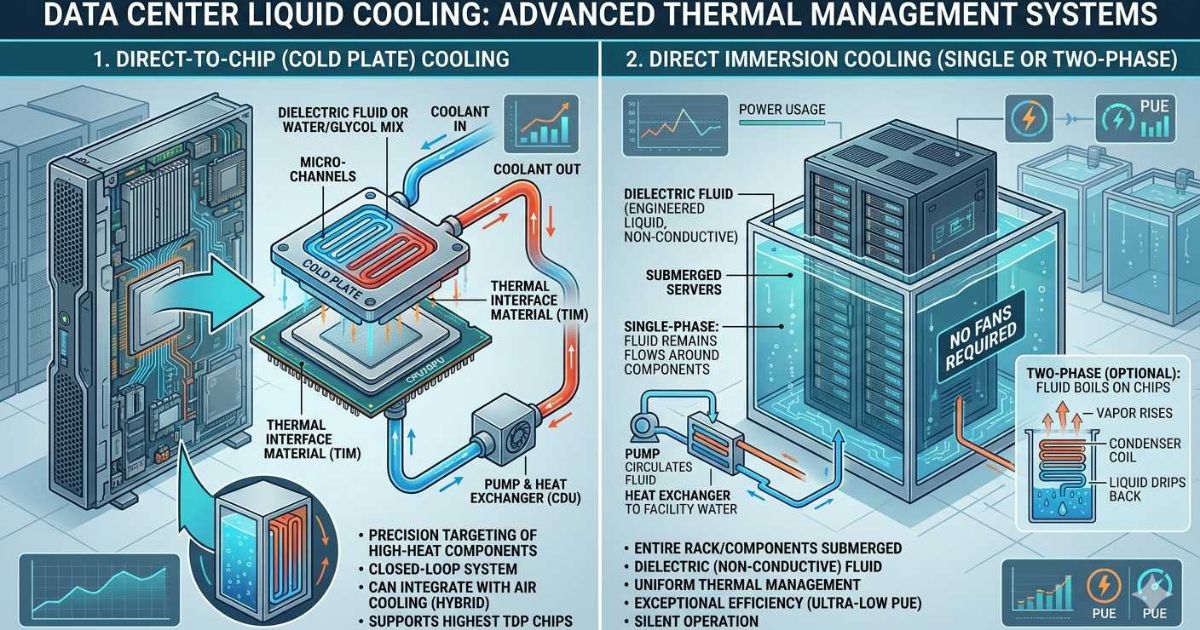

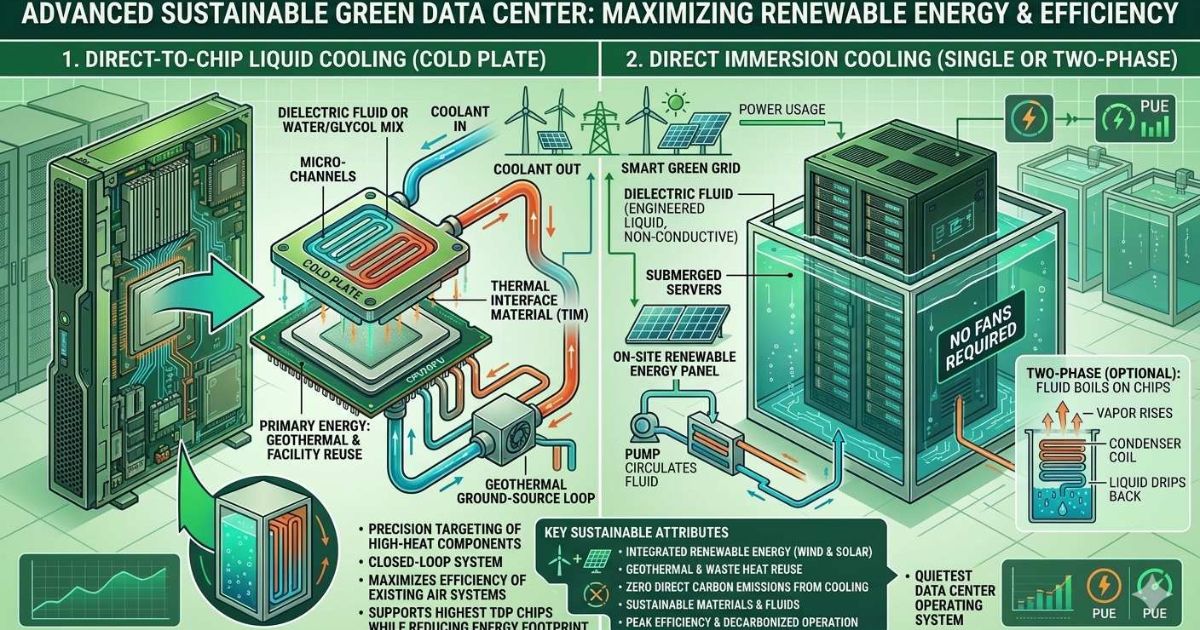

To manage the high Thermal Design Power (TDP) of modern chips, centers are turning to two main liquid solutions:

- Direct-to-Chip Cooling: A cold plate is attached directly to the CPU or GPU. Liquid flows through the plate, absorbing heat far more efficiently than air.

- Immersion Cooling: Servers are submerged in a non-conductive, “dielectric” fluid. This allows for massive power density and nearly silent operation, as fans are no longer required.
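A rough way to see why liquid wins is the steady-state heat balance Q = m_dot * c_p * dT, which gives the coolant flow needed to carry away a given heat load. The sketch below compares water and air for the 100 kW rack mentioned earlier. The 10 K temperature rise is an assumption, the fluid properties are approximate room-temperature textbook values, and real systems often use water-glycol mixes or dielectric fluids with different properties.

```python
def coolant_volume_flow(heat_w: float, specific_heat_j_per_kg_k: float,
                        density_kg_per_m3: float, delta_t_k: float) -> float:
    """Volume flow in m^3/s needed to absorb heat_w watts with a delta_t_k temperature rise."""
    if delta_t_k <= 0:
        raise ValueError("temperature rise must be positive")
    # Mass flow in kg/s from the heat balance Q = m_dot * c_p * dT.
    mass_flow = heat_w / (specific_heat_j_per_kg_k * delta_t_k)
    return mass_flow / density_kg_per_m3


RACK_HEAT_W = 100_000   # 100 kW rack, as in the article
DELTA_T_K = 10.0        # assumed coolant temperature rise

# Approximate properties near room temperature.
WATER = {"specific_heat": 4186.0, "density": 997.0}
AIR = {"specific_heat": 1005.0, "density": 1.2}

water_flow = coolant_volume_flow(RACK_HEAT_W, WATER["specific_heat"], WATER["density"], DELTA_T_K)
air_flow = coolant_volume_flow(RACK_HEAT_W, AIR["specific_heat"], AIR["density"], DELTA_T_K)

print(f"water: {water_flow * 1000:.1f} L/s")
print(f"air:   {air_flow:.1f} m^3/s")
```

Under these assumptions the rack needs about 2.4 liters of water per second, versus roughly 8 cubic meters of air per second, which is why dense racks move to cold plates or immersion tanks.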

Fiber Optics: The High-Speed Nervous System

The “Pipe” part of the old model has also evolved. While copper was once the king of the data center, it has been largely replaced by high-density fiber optics.

Why Fiber is a “Green” Technology

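- Minimal Heat Generation: Copper cables lose some energy as heat through electrical resistance. Fiber carries light, so the cable itself generates negligible heat (the optical transceivers at each end still draw power), reducing the building’s overall cooling load.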

- Airflow Efficiency: Fiber cables are significantly thinner than copper. In a crowded data center, thin cables allow for better Airflow Optimization, meaning the cooling system doesn’t have to work as hard to push air through the racks.

- Scalability: A single pair of fiber strands can carry more data than a massive bundle of copper, allowing data centers to grow without cluttering the facility.

Six Ways Data Centers Provide Value in 2027

To move beyond being a commodity, modern data centers now offer these six advanced services:

- Software-Defined Networking (SDN): Customers can manage their connections through a software dashboard, allowing them to spin up 400G “pipes” in seconds.

- Edge Computing Integration: By placing smaller centers closer to end-users, providers can offer lower latency for autonomous vehicles and 6G devices.

- Predictive AI Maintenance: Centers use AI to monitor “health signals” from hardware, predicting a power failure or cooling leak before it actually happens.

- Heat Recycling: Leading-edge centers capture the waste heat from their servers and sell it back to cities to provide District Heating for nearby buildings.

- Smart Power Purchasing: Modern centers participate in “Demand Response” programs, using on-site battery storage to help stabilize the public power grid during peak hours.

- Hyper-Personalized Security: Moving past simple badges, modern facilities use AI-driven biometrics and “Human-in-the-Loop” monitoring to ensure data safety.
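The predictive maintenance idea in the list above can be illustrated with a very simple stand-in: flag any sensor reading that deviates sharply from its recent history. This is a rolling z-score check, not an AI model, and the article does not say which methods real facilities use. The function name, window size, threshold, and temperature readings are all hypothetical.

```python
from collections import deque
from statistics import mean, stdev


def find_anomalies(readings, window=5, threshold=3.0):
    """Return indices of readings that deviate from the mean of the previous
    `window` readings by more than `threshold` standard deviations."""
    history = deque(maxlen=window)
    anomalies = []
    for index, value in enumerate(readings):
        if len(history) == window:
            mu = mean(history)
            sigma = stdev(history)
            # Skip the check when the window is perfectly flat (sigma == 0).
            if sigma > 0 and abs(value - mu) > threshold * sigma:
                anomalies.append(index)
        history.append(value)
    return anomalies


# Hypothetical coolant supply temperatures in degrees Celsius.
temps = [30.1, 30.0, 30.2, 29.9, 30.1, 30.0, 30.2, 34.5, 30.1, 30.0]
print(find_anomalies(temps))  # prints [7]
```

One limitation of this sketch is that a flagged reading still enters the history window, which temporarily widens the tolerance for the readings that follow. A production system would likely handle that case and combine many signals rather than a single sensor.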

Sustainability as a Business Strategy

In 2027, being “Green” is a financial requirement. Between carbon taxes and high energy prices, an inefficient data center is a failing data center. By focusing on Sustainable Waste Practices and hardware longevity, modern facilities are lowering their operational costs. This allows them to offer better pricing to their customers, proving that what is good for the planet is also good for the bottom line.

Conclusion

The transition from the “Ping-Power-Pipe” model to a high-efficiency ecosystem is one of the most significant changes in IT infrastructure. By embracing metrics like PUE and technologies like liquid cooling and software-defined systems, modern data centers have become much more than just a place to put a server. They are the efficient, high-speed engines of the modern world.

As a business, choosing a data center that “goes beyond” ensures that your infrastructure is future-proof, sustainable, and ready for the AI-driven challenges of tomorrow.

FAQ

What is the biggest flaw in the old Ping-Power-Pipe model?

The old model focused only on availability, not efficiency. It ignored the massive amount of energy wasted on cooling and the limitations of air-based heat management.

How does a PUE of 1.2 help my business?

A lower PUE means the data center is more efficient. This usually results in lower “pass-through” energy costs on your monthly bill and helps your company meet its environmental (ESG) goals.
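As a rough illustration of the cost effect, the sketch below assumes a billing model in which the customer pays for IT energy multiplied by the facility PUE. Actual contracts vary, and the 50 kW load, the price of 0.10 per kWh, and the 730-hour month are hypothetical values.

```python
def monthly_energy_cost(it_load_kw: float, pue: float,
                        price_per_kwh: float, hours: float = 730.0) -> float:
    """Estimated monthly energy charge when overhead is passed through via PUE."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_load_kw * hours * pue * price_per_kwh


IT_LOAD_KW = 50.0       # hypothetical customer IT load
PRICE_PER_KWH = 0.10    # hypothetical energy price

legacy_cost = monthly_energy_cost(IT_LOAD_KW, pue=1.7, price_per_kwh=PRICE_PER_KWH)
modern_cost = monthly_energy_cost(IT_LOAD_KW, pue=1.2, price_per_kwh=PRICE_PER_KWH)

print(f"PUE 1.7: {legacy_cost:,.2f} per month")
print(f"PUE 1.2: {modern_cost:,.2f} per month")
print(f"difference: {legacy_cost - modern_cost:,.2f} per month")
```

With these inputs the same IT load costs about 29 percent less at a PUE of 1.2 than at 1.7, since the bill scales directly with the ratio.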

Why is liquid cooling becoming the standard?

Modern AI chips generate so much heat that air cooling alone struggles to keep them at a safe operating temperature. Liquid cooling is more compact and moves heat far more effectively: water, for example, conducts heat on the order of 25 times better than air.

What is the role of AI in modern data center management?

AI is used to optimize cooling systems in real-time, manage power distribution, and predict when physical equipment might fail, ensuring better uptime and lower waste.